On Monday 3rd October, the Melbourne Section of the AES held our regular bi-monthly meeting.

We welcomed about 30 members and guests via Zoom.

Angus Davidson from Red Road Immersive studio presented to us on the topic

Recording Music for Immersive Platforms

– applying Dolby Atmos to music streaming

Chairman Graeme Huon introduced Angus, who started his presentation with a brief run-through of his career to date.

He then moved on to introduce the studio design decisions necessary for Dolby Atmos mixing, covering the appropriate room size for different applications (music, video, or film work), the acoustic treatment, and the choice between working with a physical console and mixing “in-the-box”.

He then spoke about equipment choices, emphasising how critical the choice of speakers is.

He commented that the Dolby Atmos acoustic level for music is specified at 85 dBC for each speaker, which is an SPL that many speakers find difficult to generate cleanly. In this regard, he reported that he had settled on KV2 speakers, which he has found to be well-suited to the application.
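To give a feel for what the 85 dBC per-speaker figure implies for the room as a whole, the following is a minimal sketch of the standard power-sum rule for uncorrelated sources. The 11-speaker count (a 7.1.4 layout without the LFE) and the assumption that every speaker plays uncorrelated material at the same level are illustrative only and were not stated in the talk; the function name is hypothetical.

```python
import math


def combined_spl_db(levels_db):
    """Power-sum a list of SPL values (in dB) from uncorrelated sources."""
    total_power = sum(10 ** (level / 10) for level in levels_db)
    return 10 * math.log10(total_power)


PER_SPEAKER_DBC = 85.0  # per-speaker level quoted in the talk
NUM_SPEAKERS = 11       # assumption: 7.1.4 layout, LFE excluded

total = combined_spl_db([PER_SPEAKER_DBC] * NUM_SPEAKERS)
print(f"Combined level: {total:.1f} dB")  # 85 + 10*log10(11), about 95.4 dB
```

Under these assumptions the combined level is roughly 10 dB above the single-speaker figure, which helps explain why clean output at this level is a demanding requirement for each speaker.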

He then demonstrated the Dolby DARDT (Dolby Audio Room Design Tool), a spreadsheet used for room/speaker design for Atmos use. A link to a Dolby video tutorial series, which includes a download link to the spreadsheet file, is at the bottom of this page.

Angus went on to speak of the use of the LFE (Low Frequency Effects) speaker, commenting that its purpose is to provide effects audio, and that Dolby and other stakeholders have specifically recommended against using the LFE channel for bass supplementation – it is not recommended for sub-woofer music content.

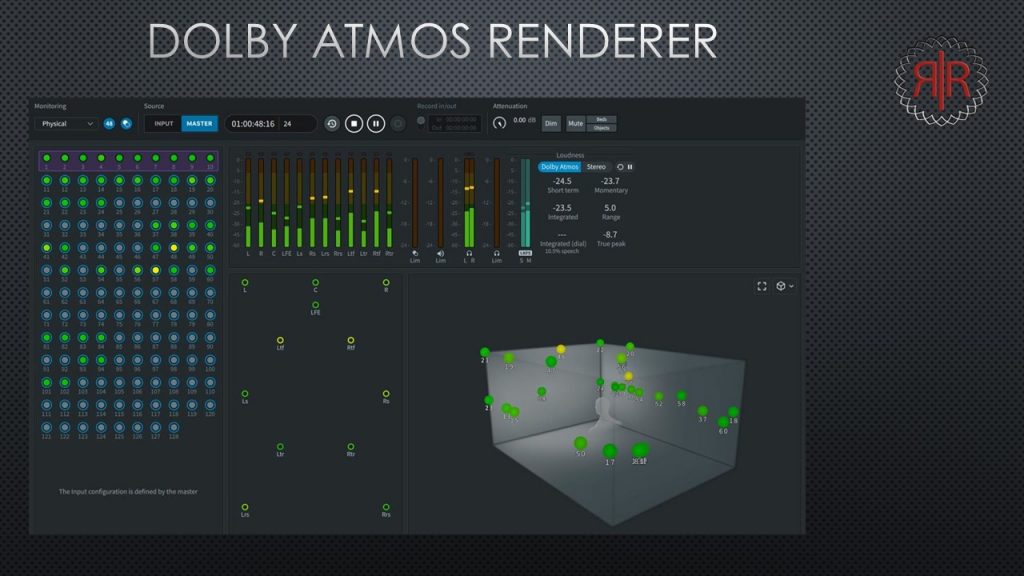

Angus then outlined the equipment used for Dolby Atmos content creation – covering various Digital Audio Workstation (DAW) systems, computers, and software such as the Dolby Atmos Production Suite, and Dolby Atmos Mastering Suite.

He described the differences between the Dolby Atmos Mastering Suite and the Dolby Atmos Production Suite in terms of the delivery specification and workflow. He then described the Music Panner’s use for music production, as well as the Binaural Settings plugin which offers control of the Dolby Atmos Renderer and Binaural Renderer from within the DAW.

He described the current popularity of music listening via headphones and earbuds as driving the need to consider the binaural element of surround music.

He described HRTF (Head Related Transfer Function) and recounted his recent participation in a Dolby beta program for a phone app that uses images of your actual head to develop a unique HRTF model for each individual.

He next described the operation of the Renderer, demonstrating placing the audio objects in 3D space.

Following this, he introduced us to the Arvus H2-4D, a useful piece of hardware that takes up to 16 channels of HDMI audio and outputs them to a range of standard audio interfaces such as AES3, Dante, Ravenna, Balanced Analog, and HDMI-Audio. This permits the auditioning/monitoring of existing distributed streamed surround content without needing super-expensive hardware.

Angus then covered the evolution of music listening from physical media, through digital downloads, and finally to streaming. He cited music industry (RIAA) statistics showing that streaming in the US grew from 10% to 80% of all music listening in the decade from 2010 to 2020.

He then listed the streaming platforms supporting Dolby Atmos – Apple Music, Tidal, Amazon Music, Anghami (Lebanese-Arabic), Hungama (India), and Naver Vibe (South Korea).

He noted that, so far, Spotify – the world’s biggest streaming platform – was not offering Atmos or any other spatial content.

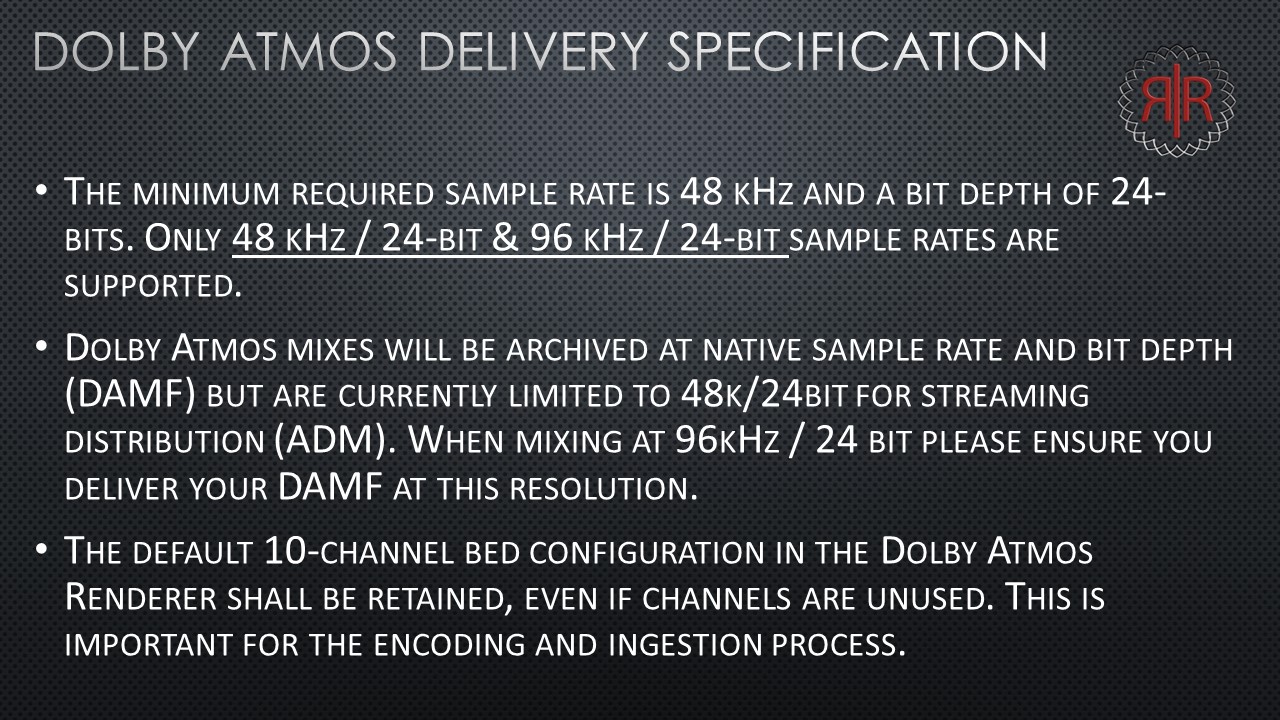

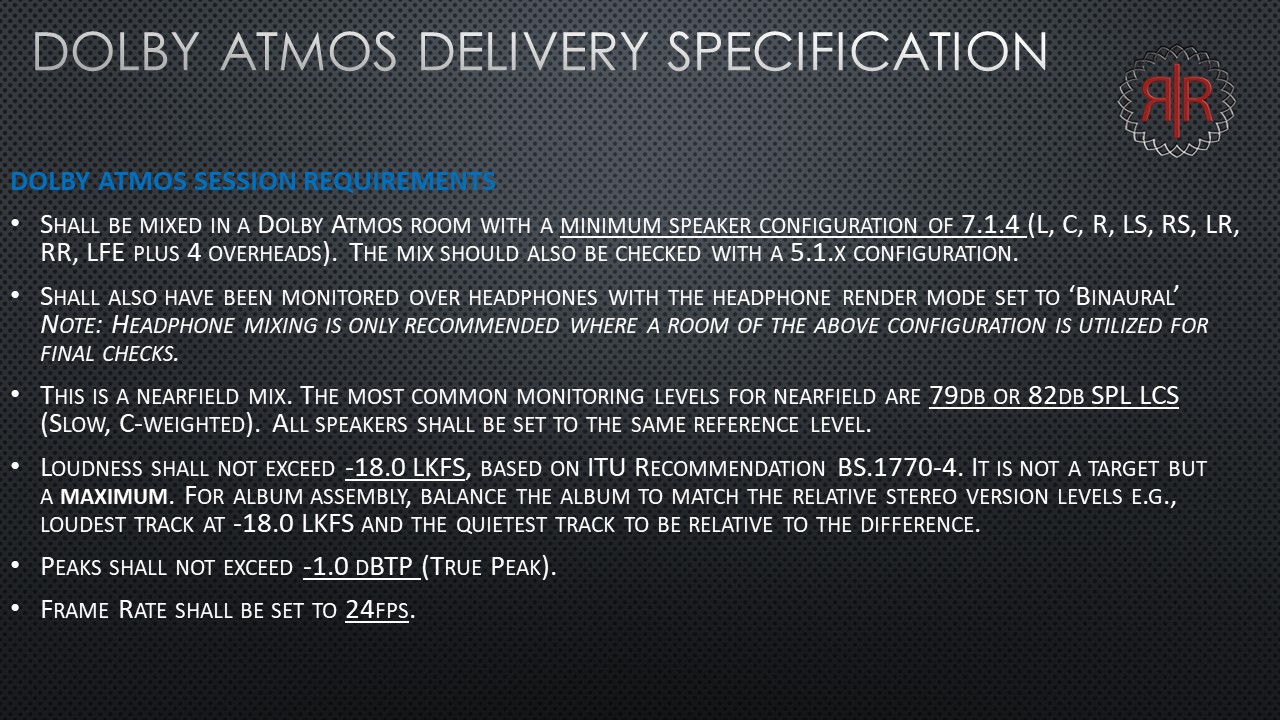

He then presented an Atmos Delivery Specification, which is a combination of recommendations from Dolby, Netflix, and the Universal Music Group (UMG). He indicated that this is what has become the standard procedure for mixing in Dolby Atmos for music.

He followed this with a list of specific recommendations for the mix from UMG.

Angus concluded his presentation by listing examples of Atmos releases in various music genres, describing varying approaches for the different genres.

In the case of synch music, he referred the audience to a Mix with the Masters video series by Alan Meyerson on his recording and mixing of the Hans Zimmer music for the film Pirates of the Caribbean 5, as being a particularly useful look at the process.

He then threw the meeting open to questions.

Questions covered positional decisions for different music genres, the challenge of adding reverb to objects and having it move with the object, using multiple beds, session file exchange formats, positional metadata, and the positioning of microphone arrays (Decca tree, etc.). Angus cited the Netflix project Sol Levante, which demonstrates the mic array positioning process.

It was a most interesting and illuminating presentation.

We thank Angus for the time and effort he put into preparing and presenting on this increasingly important topic.

A video recording of the Zoom session has been created. An edited version can be found below.

This video can be viewed directly on YouTube at: https://www.youtube.com/watch?v=ivG7BiDrGVU

A PDF version of Angus’ slides is available at:

https://www.aesmelbourne.org.au/wp-content/media/AES%20Dolby%20Atmos%20audio%20Pres.pdf

Angus’ links:

Dolby DARDT Quick Start Video Series (includes file download link).

https://professionalsupport.dolby.com/s/article/HE-DARDT-Quick-Start-Video-Series?language=en_US

Dolby Atmos Home Entertainment Studio Technical Guidelines.

https://professionalsupport.dolby.com/s/article/Dolby-Atmos-Home-Entertainment-Studio-Technical-Guidelines?language=en_US

Learn to create in Dolby Vision and Dolby Atmos.

https://professionalsupport.dolby.com/s/learning?language=en_US

Dante Virtual Soundcard.

https://www.audinate.com/products/software/dante-virtual-soundcard

Dolby Atmos Content Creation.

https://professional.dolby.com/product/dolby-atmos-content-creation/

Working With DAWs.

https://professionalsupport.dolby.com/s/topic/0TO4u000000f10WGAQ/working-with-daws?language=en_US

KV2 Audio.

https://www.kv2audio.com/

Sol Levante video (a Netflix subscription required):

https://opencontent.netflix.com/

Alan Meyerson on mixing Pirates 5 (Mix with the Masters Premium membership required; trailer free to view):

https://mixwiththemasters.com/videos/inside-the-track-7-hans-zimmer-pirates-of-the-caribbean